Object Detection

Main functionalities

The object detection module perceives the changing environment so that the system can adapt its actions accordingly. It receives color frames and depth information from a camera sensor and returns information about the detected objects to the robot controller. The camera can be mounted either above the pile of objects or on the end-effector of the robot manipulator. The module is mainly responsible for detecting objects that an industrial robot can pick and for estimating their pose.

Technical specifications

The object detection module uses an RGB-D camera to detect objects and estimate their pose. Objects are detected in the RGB frame, and the depth frame is used to determine the pick-up position. Two detection approaches are available: a handcrafted detector and a learned detector based on a convolutional neural network (YOLO architecture).
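A minimal sketch of how a depth reading can turn a pixel detected in the RGB frame into a 3D pick-up position: the pixel is back-projected through an ideal pinhole camera model. It assumes the depth frame is aligned to the RGB frame and ignores lens distortion; the intrinsics (`fx`, `fy`, `cx`, `cy`) and the `deproject_pixel` helper are hypothetical, and the module's actual computation may differ.

```python
from collections import namedtuple

# Hypothetical pinhole intrinsics in pixels: focal lengths (fx, fy), principal point (cx, cy).
PinholeIntrinsics = namedtuple("PinholeIntrinsics", ["fx", "fy", "cx", "cy"])


def deproject_pixel(intr, u, v, depth_m):
    """Back-project pixel (u, v) with metric depth into a 3D point in the camera frame.

    Assumes an undistorted pinhole model and a depth frame aligned to the RGB frame.
    Returns (x, y, z) in metres, with z along the optical axis.
    """
    if depth_m <= 0:
        # Depth cameras commonly report 0 where no valid depth was measured.
        raise ValueError("no valid depth at pixel (%s, %s)" % (u, v))
    x = (u - intr.cx) * depth_m / intr.fx
    y = (v - intr.cy) * depth_m / intr.fy
    return (x, y, depth_m)


if __name__ == "__main__":
    intr = PinholeIntrinsics(fx=600.0, fy=600.0, cx=320.0, cy=240.0)
    # Centre of a detected bounding box at pixel (380, 210), 0.8 m from the camera.
    print(deproject_pixel(intr, 380, 210, 0.8))
```

In practice the intrinsics would come from the camera driver (for ROS drivers, the `sensor_msgs/CameraInfo` message) rather than being typed in.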

The handcrafted approach uses segmentation algorithms (Canny Edge Detector, Selective Search, Watershed Segmentation) to divide the input image into candidate regions. The parameters of each candidate (e.g. height, width, area, the ratio between the object's principal axes) are compared with preselected or automatically determined values corresponding to the objects of interest. The mean and variance of the depth inside the candidate region are also taken into account to identify the regions most likely to correspond to pickable objects. Based on these parameters, each candidate is scored. If the highest-scoring candidate also exceeds a threshold score, its centre position is sent to the robot for picking. This method is relatively hard to adapt to new objects and does not classify the localized object; therefore, a separate classification step is required, for example the object classification module.
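The scoring step can be sketched as follows, assuming segmentation has already produced candidate regions with the features listed above. The scoring rule (weighted relative deviations from expected values plus a direct penalty on depth variance, mapped to a score in (0, 1]), the `Candidate` fields and the threshold value are illustrative assumptions, not EDI's implementation.

```python
from collections import namedtuple

# Hypothetical features of one segmented candidate region (pixel sizes, depth in metres).
Candidate = namedtuple(
    "Candidate",
    ["centre", "width", "height", "area", "axis_ratio", "depth_mean", "depth_var"],
)


def score_candidate(cand, expected, weights):
    """Score a candidate in (0, 1]; 1 means it matches the expected object exactly.

    expected: feature name -> expected non-zero value (depth_var excluded, handled below)
    weights:  feature name -> importance of that feature (default 1.0)
    """
    penalty = 0.0
    for name, target in expected.items():
        # Weighted relative deviation from the expected value.
        penalty += weights.get(name, 1.0) * abs(getattr(cand, name) - target) / target
    # Assumption: low depth variance suggests a flat, unoccluded top surface,
    # so the variance itself is penalised.
    penalty += weights.get("depth_var", 1.0) * cand.depth_var
    return 1.0 / (1.0 + penalty)


def select_pick_point(candidates, expected, weights, threshold):
    """Return the centre of the best-scoring candidate, or None if it fails the threshold."""
    if not candidates:
        return None
    best = max(candidates, key=lambda c: score_candidate(c, expected, weights))
    if score_candidate(best, expected, weights) < threshold:
        return None
    return best.centre


if __name__ == "__main__":
    expected = {"width": 60.0, "height": 180.0, "area": 10800.0,
                "axis_ratio": 3.0, "depth_mean": 0.75}
    weights = {"depth_var": 100.0}
    candidates = [
        Candidate((310, 220), 58.0, 175.0, 10150.0, 3.0, 0.74, 0.0001),
        Candidate((120, 400), 90.0, 95.0, 8550.0, 1.05, 0.80, 0.0040),
    ]
    print(select_pick_point(candidates, expected, weights, threshold=0.5))
```

Rejecting the best candidate when it falls below the threshold is what keeps the robot from attempting a pick when no region resembles a pickable object.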

The second approach is based on a convolutional neural network. Once trained on a specific set of objects, the YOLO detector finds every instance of these objects present in each frame. The object to pick is selected by the confidence score that the YOLO model returns along with the bounding box coordinates and the class of each detected object. No additional classification is needed, since localization and classification are performed simultaneously.
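Selecting the object to pick from the YOLO output can then be as simple as taking the most confident detection. This sketch assumes the network output has already been decoded (and non-maximum suppression applied) into hypothetical `Detection` tuples with pixel-corner boxes; the `min_confidence` cut-off and returning the box centre as the pixel for the depth lookup are assumptions for illustration.

```python
from collections import namedtuple

# Hypothetical decoded YOLO output: class label, confidence in [0, 1],
# box corners (x_min, y_min, x_max, y_max) in pixels.
Detection = namedtuple("Detection", ["label", "confidence", "box"])


def choose_pick_target(detections, min_confidence=0.5):
    """Return (label, confidence, box centre) of the most confident detection, or None."""
    candidates = [d for d in detections if d.confidence >= min_confidence]
    if not candidates:
        return None
    best = max(candidates, key=lambda d: d.confidence)
    x_min, y_min, x_max, y_max = best.box
    # Box centre: the pixel at which depth would be read to get the pick-up position.
    centre = ((x_min + x_max) / 2.0, (y_min + y_max) / 2.0)
    return best.label, best.confidence, centre


if __name__ == "__main__":
    detections = [
        Detection("bottle", 0.91, (100, 50, 160, 230)),
        Detection("can", 0.97, (300, 200, 360, 280)),
        Detection("can", 0.42, (400, 100, 450, 170)),
    ]
    print(choose_pick_target(detections))
```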

The YOLO-based approach is more tolerant of variations in the environment and in the shape, size, mutual similarity and complexity of the objects. With adequate training, these factors do not significantly affect detection. However, using the detector requires the following steps:

– acquire images of object piles,

– label objects in the images,

– set up a training environment or use a pre-built environment,

– use trained model weights to detect objects.
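For the labelling step, YOLO training data is commonly stored in the Darknet text format: one file per image and one line per object, holding the zero-based class index followed by the box centre, width and height, each normalised by the image size. The sketch below converts pixel boxes into such lines; whether EDI's training environment uses exactly this format is an assumption, and the helper names are hypothetical.

```python
def to_yolo_label(class_id, box, image_width, image_height):
    """Convert a pixel box (x_min, y_min, x_max, y_max) into one YOLO label line.

    Output format: "class x_centre y_centre width height", coordinates normalised to [0, 1].
    """
    x_min, y_min, x_max, y_max = box
    if not (0 <= x_min < x_max <= image_width and 0 <= y_min < y_max <= image_height):
        raise ValueError("box must lie inside the image and have a positive size")
    x_c = (x_min + x_max) / 2.0 / image_width
    y_c = (y_min + y_max) / 2.0 / image_height
    w = (x_max - x_min) / float(image_width)
    h = (y_max - y_min) / float(image_height)
    return "%d %.6f %.6f %.6f %.6f" % (class_id, x_c, y_c, w, h)


def label_file_contents(objects, image_width, image_height):
    """Hypothetical helper: text of one image's label file from (class_id, box) pairs."""
    lines = [to_yolo_label(c, b, image_width, image_height) for c, b in objects]
    # One line per object; an image without objects gets an empty label file.
    return "".join(line + "\n" for line in lines)


if __name__ == "__main__":
    print(to_yolo_label(1, (300, 200, 360, 280), 640, 480))
```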

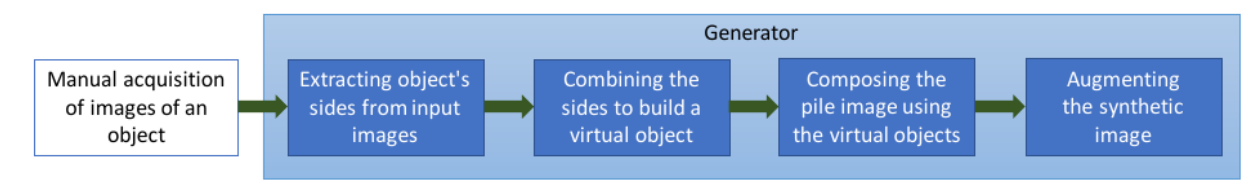

Real images can be labelled manually, but this is a long and laborious task: bounding boxes must be drawn over all pickable objects in every training sample. Therefore, EDI provides a method for generating synthetic data that is labelled automatically. The automatic annotations include the position (bounding box) and the class of all unobstructed objects. The YOLO detector has been tested with simply shaped objects (bottles, cans), but it can be trained and adapted for objects with more complex shapes.
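Because a synthetic scene is generated programmatically, each object's class and bounding box are known, so labels need no manual work. The sketch below shows one simple way to keep only unobstructed objects, assuming objects are stacked in placement order (later ones lie on top) and represented by 2D boxes rather than rendered masks; the occlusion rule and the `max_occluded_fraction` parameter are hypothetical simplifications, not EDI's generator.

```python
from collections import namedtuple

# Hypothetical placed object: class label and box (x_min, y_min, x_max, y_max) in pixels.
PlacedObject = namedtuple("PlacedObject", ["label", "box"])


def intersection_area(a, b):
    """Area of the overlap between two axis-aligned boxes (0 if they do not overlap)."""
    w = min(a[2], b[2]) - max(a[0], b[0])
    h = min(a[3], b[3]) - max(a[1], b[1])
    return max(w, 0) * max(h, 0)


def unobstructed_annotations(objects, max_occluded_fraction=0.0):
    """Return (label, box) for objects not covered by objects placed after them.

    `objects` is in placement order, so later entries lie on top of earlier ones.
    Occlusion is estimated by summing overlaps with every later box, which can
    overcount when those boxes overlap each other, i.e. it errs towards dropping objects.
    """
    annotations = []
    for i, obj in enumerate(objects):
        x_min, y_min, x_max, y_max = obj.box
        area = float((x_max - x_min) * (y_max - y_min))
        covered = sum(intersection_area(obj.box, top.box) for top in objects[i + 1:])
        if covered / area <= max_occluded_fraction:
            annotations.append((obj.label, obj.box))
    return annotations


if __name__ == "__main__":
    scene = [
        PlacedObject("bottle", (0, 0, 100, 100)),
        PlacedObject("can", (50, 50, 150, 150)),  # lies partly on top of the bottle
        PlacedObject("can", (300, 300, 350, 350)),
    ]
    print(unobstructed_annotations(scene))
```

Setting `max_occluded_fraction` above zero would keep lightly covered objects, trading label strictness for more training examples.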

Synthetic data generation

The depth sensor is connected to a PC running ROS Melodic on Ubuntu 18.04. Intel RealSense D415 and D435, Kinect v2 and Zivid depth cameras are currently supported, but any camera with a ROS driver can be used, provided its data can be published as PointCloud2 messages. All software for this module is implemented in Python 2.7. The robot and the 3D camera must be extrinsically calibrated.
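Extrinsic calibration yields the rigid transform between the camera frame and the robot base frame, which is what lets a pick point measured by the camera be expressed in robot coordinates. The sketch below applies such a 4x4 homogeneous transform to a point; the matrix values are made up, and in a ROS setup the transform would normally be published from the calibration result (for example through tf) rather than hard-coded.

```python
# Hypothetical camera-to-base transform from extrinsic calibration (values made up):
# the camera looks straight down from 1.0 m above the base origin, offset 0.5 m along base x.
# Its rotation part is 180 degrees about x, so the camera's optical (z) axis points along base -z.
T_BASE_CAMERA = [
    [1.0, 0.0, 0.0, 0.5],
    [0.0, -1.0, 0.0, 0.0],
    [0.0, 0.0, -1.0, 1.0],
    [0.0, 0.0, 0.0, 1.0],
]


def transform_point(T, point):
    """Map a 3D point through a 4x4 homogeneous transform given as row-major nested lists."""
    if T[3] != [0.0, 0.0, 0.0, 1.0]:
        raise ValueError("expected a rigid transform with bottom row [0, 0, 0, 1]")
    x, y, z = point
    return tuple(T[r][0] * x + T[r][1] * y + T[r][2] * z + T[r][3] for r in range(3))


if __name__ == "__main__":
    # A pick point 0.8 m in front of the camera, slightly off-centre.
    print(transform_point(T_BASE_CAMERA, (0.08, -0.04, 0.8)))
```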

Inputs and outputs

All data is transferred via the standard ROS transport system, using publish/subscribe and request/response semantics. The module subscribes to RGB-D sensor data and, in response to a ROS service request, returns the object pose: position (x, y, z) and orientation as a quaternion (qw, qx, qy, qz).
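The pose in the service response pairs a position with an orientation quaternion. For top-down picking, a common simplification is a single grasp rotation θ about the vertical axis (for example, aligned with the object's main axis), whose unit quaternion is (qw, qx, qy, qz) = (cos θ/2, 0, 0, sin θ/2). The sketch below builds a pose in the (x, y, z, qw, qx, qy, qz) order given above; restricting the orientation to one axis, and the `Pose` and helper names, are assumptions rather than the module's documented behaviour.

```python
import math
from collections import namedtuple

# Hypothetical pose container, fields in the order the service response lists them.
Pose = namedtuple("Pose", ["x", "y", "z", "qw", "qx", "qy", "qz"])


def yaw_to_quaternion(theta):
    """Unit quaternion (qw, qx, qy, qz) for a rotation of theta radians about the z-axis."""
    half = theta / 2.0
    return (math.cos(half), 0.0, 0.0, math.sin(half))


def make_pick_pose(position, yaw):
    """Combine a pick position (x, y, z) with a grasp yaw angle into a Pose (assumed top-down grasp)."""
    qw, qx, qy, qz = yaw_to_quaternion(yaw)
    x, y, z = position
    return Pose(x, y, z, qw, qx, qy, qz)


def quaternion_xyzw(pose):
    """Reorder the quaternion as (x, y, z, w), the field order of ROS geometry_msgs/Quaternion."""
    return (pose.qx, pose.qy, pose.qz, pose.qw)


if __name__ == "__main__":
    print(make_pick_pose((0.58, 0.04, 0.2), math.pi / 2))
```

ROS `geometry_msgs/Quaternion` lists its fields as x, y, z, w, so the order has to be mapped explicitly when filling a message, which is what `quaternion_xyzw` does.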

Formats and standards

– ROS service communication to request the pose of the object.

– The sensor data is received from the sensor driver in sensor_msgs/PointCloud2 format.

– Libraries: ROS, OpenCV, TensorFlow, PCL and the Python standard library.
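A `sensor_msgs/PointCloud2` message carries its points as a flat byte buffer described by `fields` (name, byte offset, datatype), `point_step` (bytes per point) and `is_bigendian`. Inside a ROS node, `sensor_msgs.point_cloud2.read_points` performs the decoding; to show what that involves, the standalone sketch below unpacks FLOAT32 x, y, z fields with Python's `struct` module. It takes the buffer and layout as plain arguments instead of a message object and assumes no row padding (`row_step` equal to `width * point_step`); `read_xyz` is a hypothetical helper.

```python
import math
import struct


def read_xyz(data, point_step, offsets, is_bigendian=False, skip_nans=True):
    """Decode (x, y, z) FLOAT32 triples from a PointCloud2-style byte buffer.

    data:        raw bytes of the point cloud (the message's `data` field)
    point_step:  number of bytes per point
    offsets:     byte offsets of the x, y and z fields within one point
    Assumes no padding between rows (row_step == width * point_step).
    """
    fmt = (">" if is_bigendian else "<") + "f"
    points = []
    for start in range(0, len(data) - point_step + 1, point_step):
        xyz = tuple(struct.unpack_from(fmt, data, start + off)[0] for off in offsets)
        # Invalid depth pixels are typically published as NaN; skip them if requested.
        if skip_nans and any(math.isnan(c) for c in xyz):
            continue
        points.append(xyz)
    return points


if __name__ == "__main__":
    # Two points with the common 16-byte layout: x, y, z at offsets 0, 4, 8, plus 4 spare bytes.
    buf = struct.pack("<ffff", 1.0, 2.0, 3.0, 0.0) + struct.pack("<ffff", 0.5, -0.25, 0.75, 0.0)
    print(read_xyz(buf, 16, (0, 4, 8)))
```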

Owner (organization)

Institute of Electronics and Computer Science (EDI)

Trainings

To learn more about the solution, follow the link below to access the training on the Moodle platform:

Object classification and detection