Data stream processing in Human-Robot-Collaboration

Name of demonstration

Data stream processing in Human-Robot-Collaboration

Main objective

The main objective of the AURORA use case is to demonstrate the feasibility and potential of data stream processing in HRC-supported finishing processes for car body models.

Short description

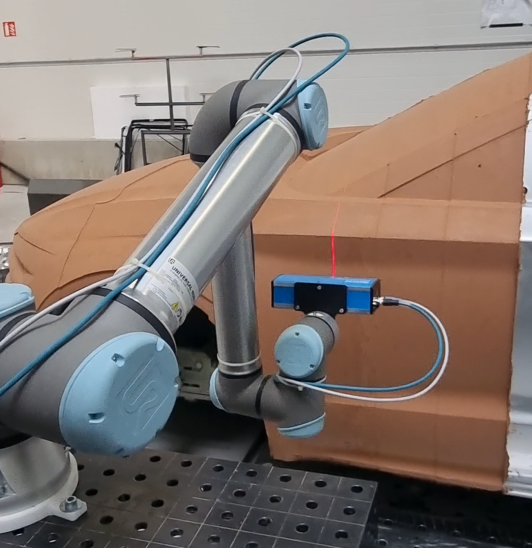

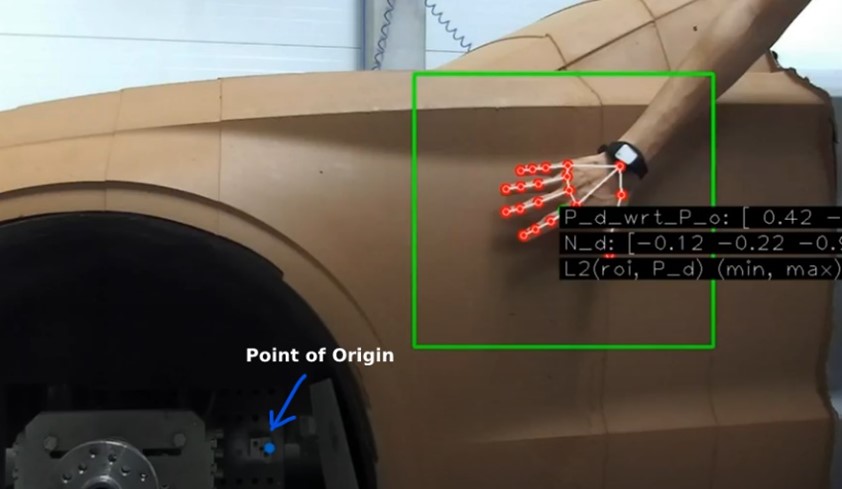

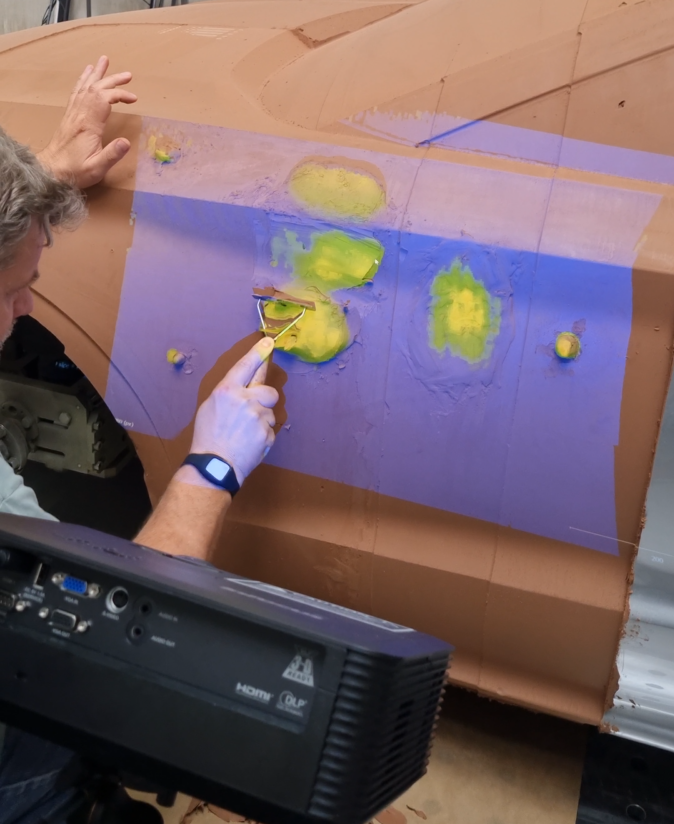

The project applies Human-Robot Collaboration (HRC) so that an operator can use predefined hand gestures to command a robotic scanner and direct it to specific regions of interest. The 3D scanner mounted on the robot produces 3D point cloud data, which is used for digital point cloud comparison and for projecting a heat map onto the clay surface of the car model. For gesture recognition, a smart wearable IMU device captures the movements of the operator's arm, which are then interpreted by a machine learning gesture recognition algorithm.
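The gesture pipeline described above can be illustrated with a minimal sketch of windowed stream processing. This is not the AURORA implementation: the nearest-centroid classifier stands in for the unspecified machine learning algorithm, and the gesture names, centroid values, command names, window size, and step are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev
import math

# Hypothetical gesture centroids in feature space:
# (mean_x, mean_y, mean_z, std_x, std_y, std_z) of the acceleration window.
# Real values would be learned from labelled IMU recordings.
CENTROIDS = {
    "point_left": (-1.0, 0.0, 0.0, 0.1, 0.1, 0.1),
    "point_right": (1.0, 0.0, 0.0, 0.1, 0.1, 0.1),
    "stop": (0.0, 0.0, 1.0, 0.1, 0.1, 0.1),
}

# Hypothetical mapping from recognised gesture to a robot command name.
COMMANDS = {
    "point_left": "move_scanner_left",
    "point_right": "move_scanner_right",
    "stop": "halt_robot",
}


def features(window):
    """Per-axis mean and population standard deviation of one window."""
    xs, ys, zs = zip(*window)
    return (mean(xs), mean(ys), mean(zs), pstdev(xs), pstdev(ys), pstdev(zs))


def classify(feat):
    """Return the gesture whose centroid is closest to the feature vector."""
    return min(CENTROIDS, key=lambda g: math.dist(feat, CENTROIDS[g]))


def gesture_stream(samples, window_size=20, step=10):
    """Yield one gesture label per window as (x, y, z) samples arrive."""
    window = deque(maxlen=window_size)
    for i, sample in enumerate(samples, start=1):
        window.append(sample)
        # Emit once the window is full, then every `step` samples.
        if len(window) == window_size and (i - window_size) % step == 0:
            yield classify(features(window))


def to_command(gesture):
    """Translate a recognised gesture into a (hypothetical) robot command."""
    return COMMANDS[gesture]
```

Because `gesture_stream` is a generator, labels are produced as samples arrive rather than after the whole recording is available, which is the property that matters for commanding the robot during the process.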

Owner of the demonstrator

Dr. Pierre T. Kirisci

Responsible person

Dr. Pierre Taner Kirisci (Project Coordinator), pierre.kirisci@pumacy.de

NACE

C - Manufacturing

Keywords

Robotics, Vision System, Machine Learning, Motion Planning, IoT, Cybersecurity, Artificial Intelligence, Predictive Maintenance, Revamping, Human-Robot Collaboration, Wearables, Collaborative Robotics, Finishing, Automation, Smart Manufacturing.

Benefits for the users

The solution provides substantial benefits for users: it increases process efficiency (e.g., by reducing cycle times and rework), improves product quality, and improves ergonomics for human workers.

Innovation

The innovation lies in the fact that collaborative robots combined with AI-based data analytics are still rarely applied to support and optimize finishing processes. The solution also provides a good basis for a whole spectrum of other applications (training and qualification, product and work quality assessment, process mining, teach-in).

Risks and limitations

Human gesture tracking might be an issue for some users. Further limitations are that collaborative robots might be too slow for some processes or too limited in their possible payloads. In addition, conditions in the industrial environment (such as lighting) could affect the quality of the camera images and of the projections on the car body model.

Technology readiness level

5

Sectors of application

The project focused mainly on finishing processes within the automotive sector, in particular the clay laying process on car body models. However, the solution can also support other finishing activities such as polishing and carving.

Potential sectors of application

Industrial assembly processes in general, across sectors such as Automotive, Consumer Goods, Construction, and Maritime.

Patents / Licenses / Copyrights

Hardware / Software

Hardware:

UR10 Robot

3D machine vision TriSpectorP1000

Zed2i Camera

METAMOTIONS IMU sensor

Projector

Software:

UR10 control software

Data Analytics Tool

Python: For Machine Learning and Object detection

CloudCompare: For 3D point cloud comparison
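To illustrate what a point cloud comparison followed by heat map colouring involves, here is a minimal sketch in plain Python. It is not the CloudCompare implementation or the project's code: it uses a brute-force nearest-neighbour search, and the function names, the millimetre units, and the blue-to-red colour ramp are assumptions made for illustration.

```python
import math


def cloud_to_cloud_distances(scanned, reference):
    """For each scanned point, distance to its nearest reference point.

    Brute force, O(len(scanned) * len(reference)); adequate only for
    small clouds. Production tools use spatial indexes instead.
    """
    return [min(math.dist(p, q) for q in reference) for p in scanned]


def heat_colour(distance, max_distance):
    """Map a deviation to an RGB tuple: blue at 0, red at >= max_distance."""
    t = min(max(distance / max_distance, 0.0), 1.0)
    return (round(255 * t), 0, round(255 * (1 - t)))


# Hypothetical example: coordinates in millimetres.
reference = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
scanned = [(0.0, 0.0, 0.0), (10.0, 0.0, 3.0)]

deviations = cloud_to_cloud_distances(scanned, reference)
colours = [heat_colour(d, max_distance=3.0) for d in deviations]
```

Each scanned point ends up with one colour, so the coloured cloud can be rendered as an image for a projector. Regions that deviate most from the reference appear red.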

Photos

Video

This video shows the implementation of the Trinity project AURORA. AURORA is an experiment that supports process control and optimization of Human-Robot-Collaboration (HRC) workplaces through data stream processing and machine learning. The experiment is demonstrated on a finishing process for car exterior clay models.

https://youtu.be/dtqrNb7gK68

https://youtu.be/dRstX4WitQg

Trainings

To learn more about the solution, click on the link below to access the training on the Moodle platform

Datastream processing in Human-Robot-Collaboration