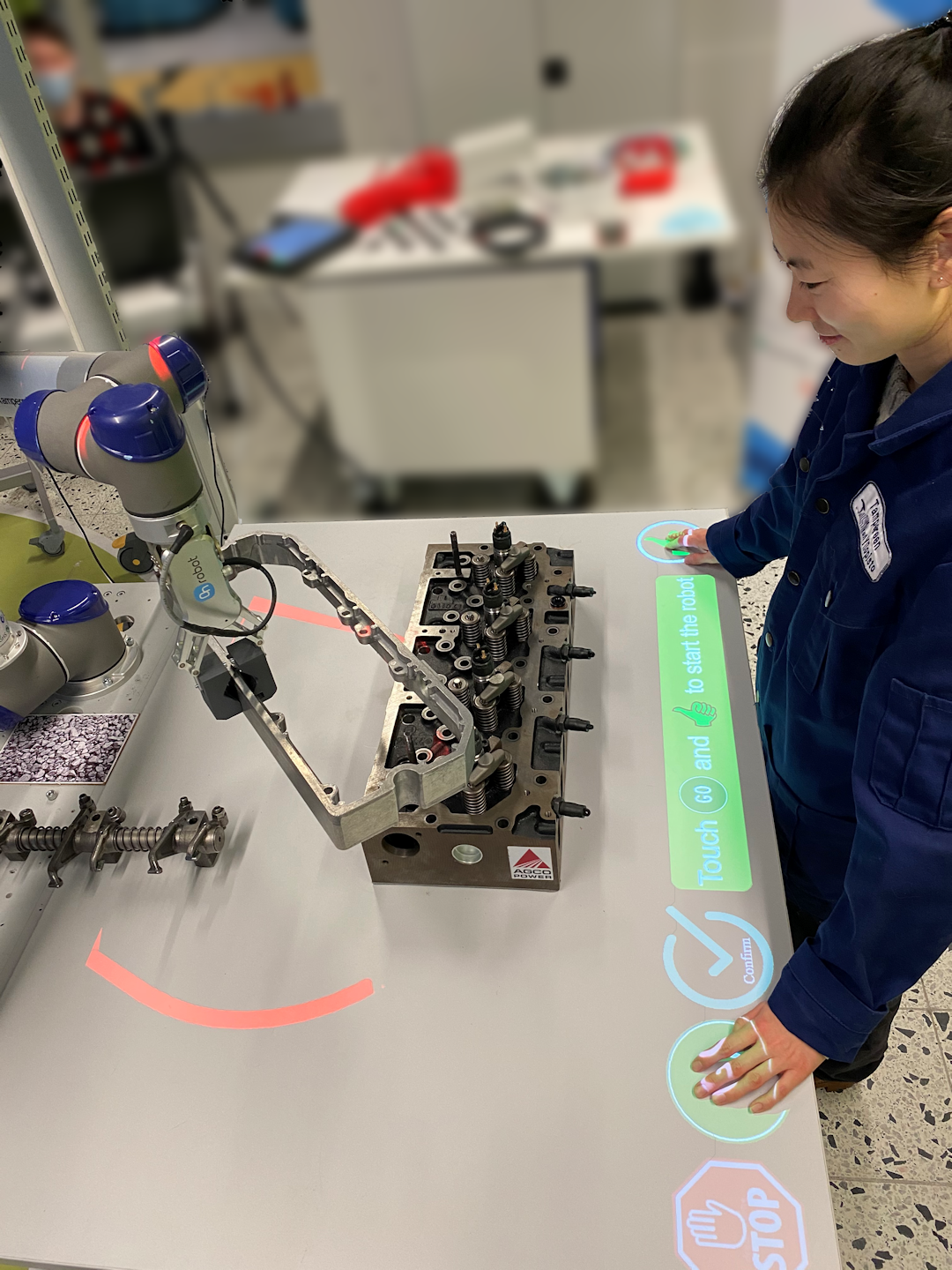

Collaborative Assembly with Vision-Based Safety System

Name of demonstration

Collaborative Assembly with Vision-Based Safety System

Main objective

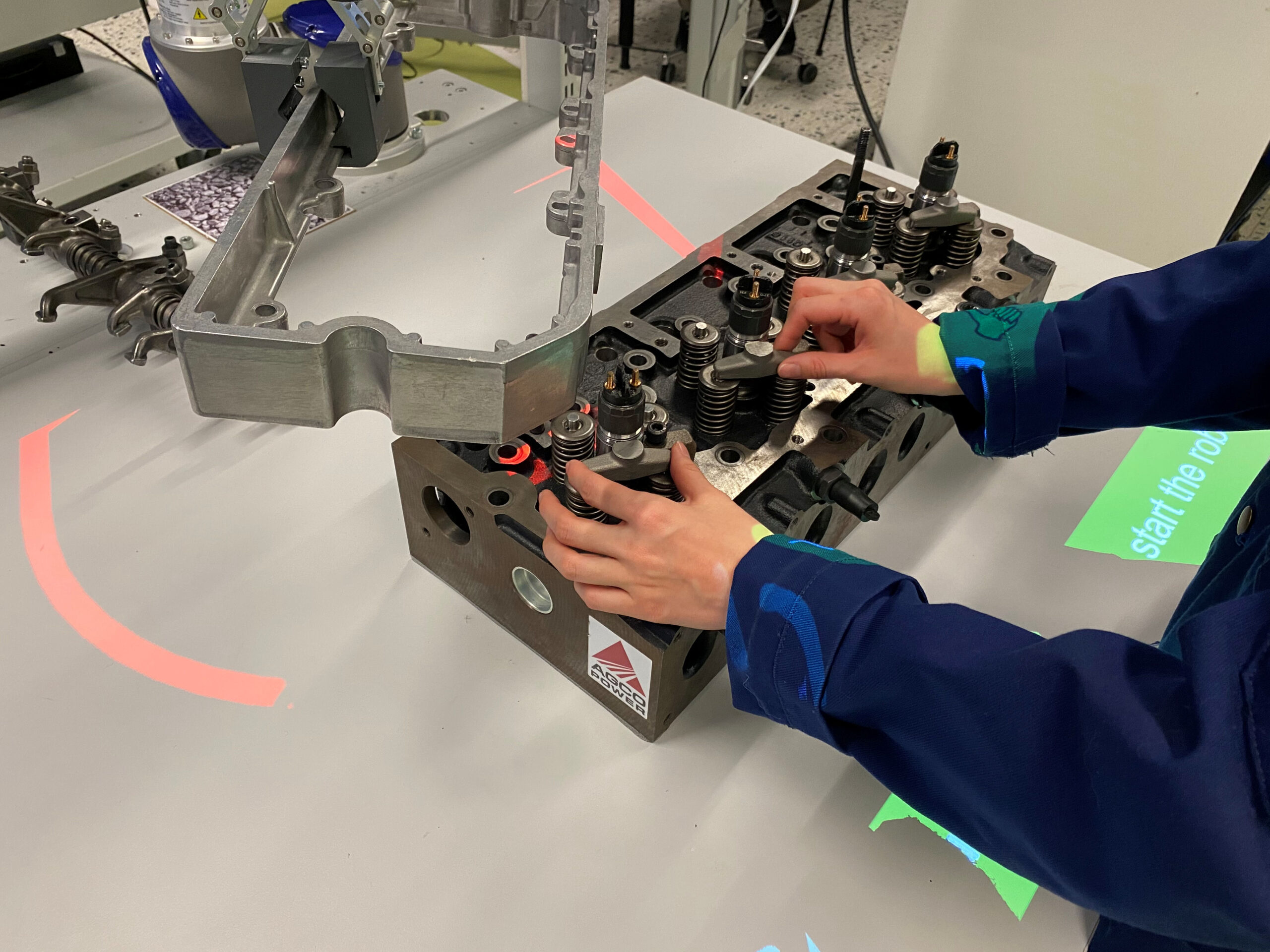

Demonstrate the capabilities of a vision-based safety system with a projector and an AR interface. Safety violations are detected through changes in the 3D point map of the working environment.

Short description

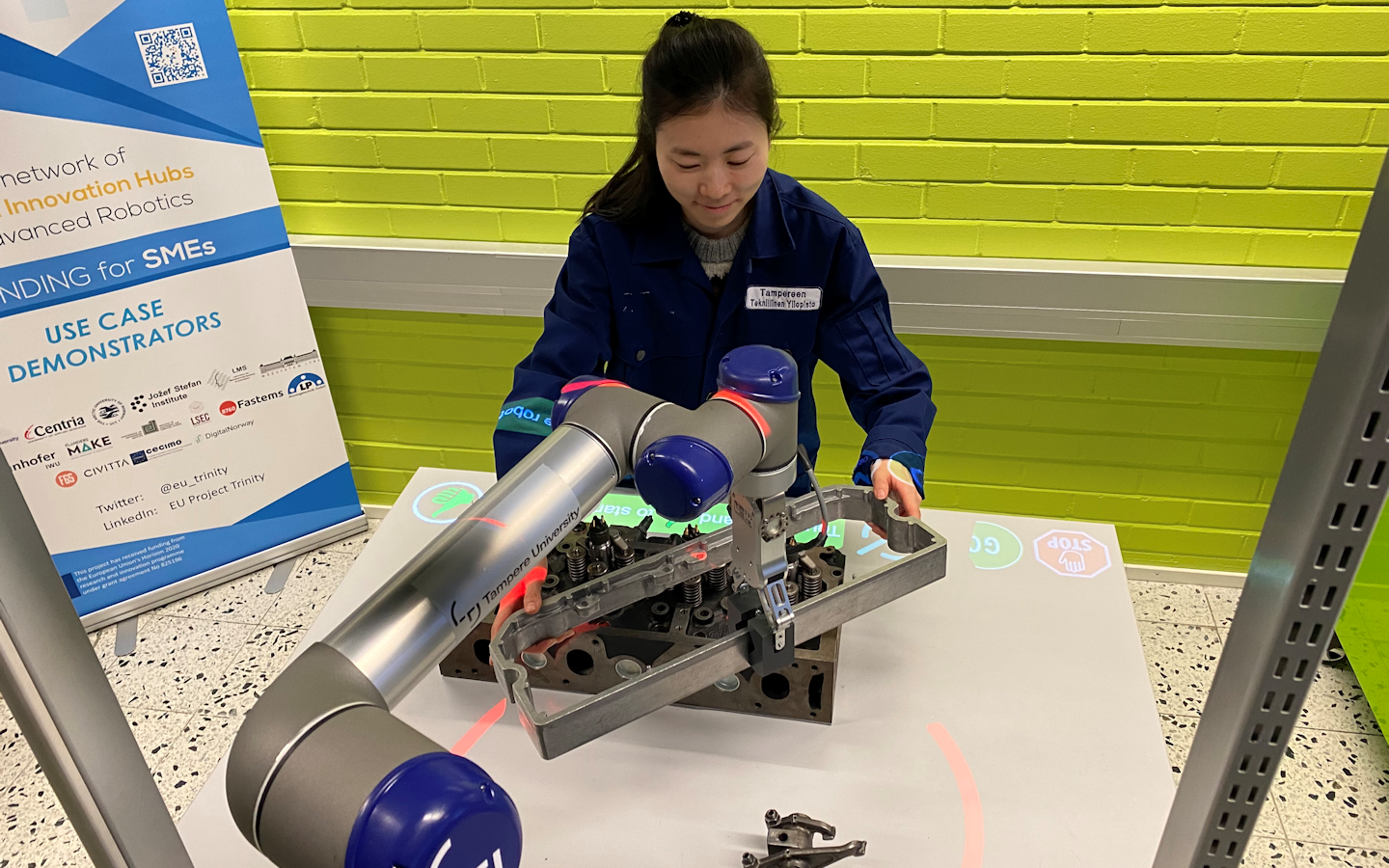

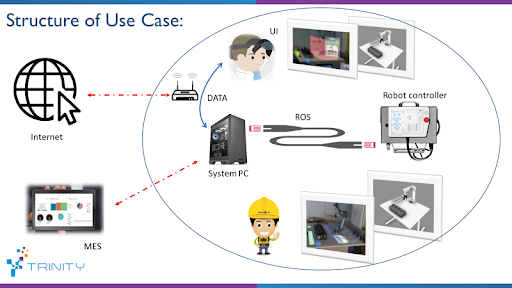

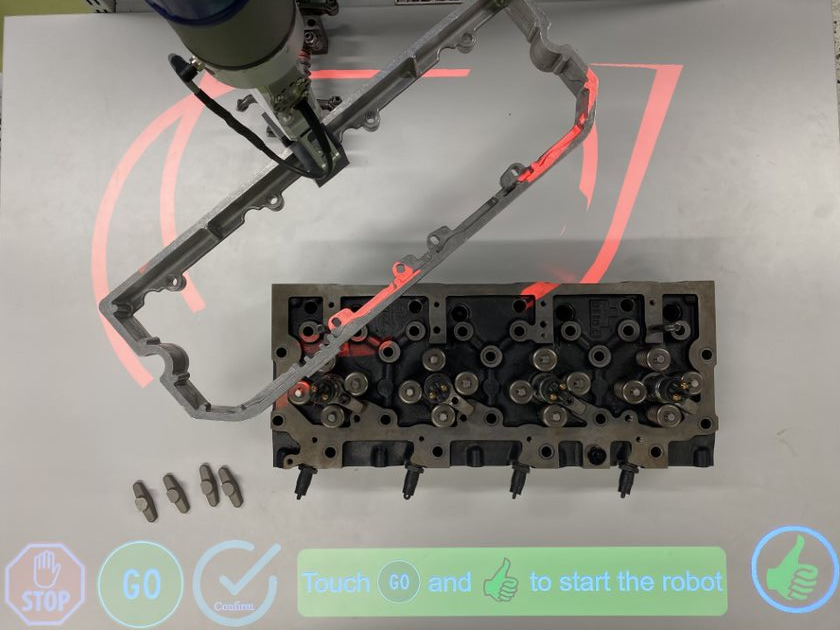

Demonstration of a vision-based safety system for human-robot collaboration in the assembly of diesel engine components. A dynamic 3D map of the working environment (robot, components, and human) is continuously updated by a depth sensor and used both for safety monitoring and for interaction between resources through a virtual GUI. The robot’s working zone is displayed to the user in augmented form so that they are aware of safety violations. The virtual GUI provides instructions for the assembly sequence and maps the UI elements that act as the controller of the system.
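The idea of detecting a violation from changes in the depth map can be illustrated with a minimal sketch. It is not the demonstrator's implementation: the function names, the rectangular zone, the 5 cm change threshold, and the minimum pixel count are all assumptions chosen for illustration, and plain Python lists stand in for real depth frames.

```python
from typing import List, Tuple

# Depth in metres, row-major; 0.0 marks an invalid reading (assumed convention).
DepthMap = List[List[float]]


def changed_pixels(reference: DepthMap, current: DepthMap,
                   zone: Tuple[int, int, int, int],
                   min_change_m: float = 0.05) -> int:
    """Count pixels inside `zone` whose depth differs from the reference map."""
    row0, col0, row1, col1 = zone  # half-open pixel rectangle (hypothetical zone format)
    count = 0
    for r in range(row0, row1):
        for c in range(col0, col1):
            ref, cur = reference[r][c], current[r][c]
            if ref <= 0.0 or cur <= 0.0:
                continue  # skip invalid sensor readings
            if abs(ref - cur) > min_change_m:
                count += 1
    return count


def zone_violated(reference: DepthMap, current: DepthMap,
                  zone: Tuple[int, int, int, int],
                  min_change_m: float = 0.05, min_pixels: int = 3) -> bool:
    """Report a violation when enough pixels in the zone have changed."""
    return changed_pixels(reference, current, zone, min_change_m) >= min_pixels


# Usage: a flat background at 2.0 m, then a hand appears 0.8 m closer.
reference = [[2.0] * 6 for _ in range(4)]
current = [row[:] for row in reference]
for r, c in [(1, 2), (1, 3), (2, 2), (2, 3)]:
    current[r][c] = 1.2
robot_zone = (1, 1, 3, 5)
print(zone_violated(reference, current, robot_zone))
```

Requiring several changed pixels rather than one is a simple way to tolerate isolated sensor noise; a real system would tune both thresholds to the sensor and the cell layout.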

Owner of the demonstrator

Tampere University

Responsible person

Project Researcher Dmitri Monakhov dmitrii.monakhov@tuni.fi

NACE

C29.3 - Manufacture of parts and accessories for motor vehicles

Keywords

Robotics, Safety, Augmented Reality, Manufacturing, Human-Robot Collaboration

Potential users

SMEs, research institutes, and academia

Benefits for the users

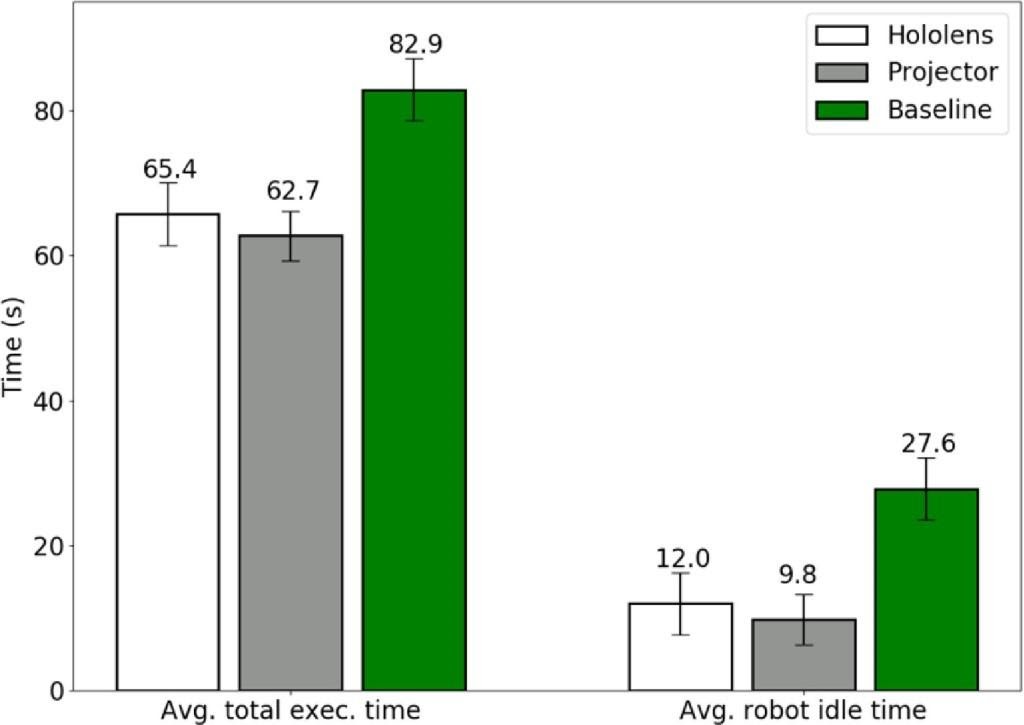

Improved user experience: the projector/AR interface can provide instructions on how to execute the current task. Research has shown that tasks performed with the help of the safety system can be completed 21-24% faster, and robot idle time is reduced by 57-64%.

Innovation

This use case uses projection-based and head-mounted augmented reality display technologies to reduce human error in the assembly sequence. Depth sensors such as the Kinect track the operator’s body parts wherever a violation could occur. This is a new approach for collaborative work cells and provides a concept of vision-based safety for the operator. The approach was studied in an academic research laboratory, where 20 participants took part in a survey. The questionnaire covered six categories: Safety, Information Processing, Ergonomics, Autonomy, Competence, and Relatedness.

Risks and limitations

Software malfunction: Because the whole system is run by a computer, the software or the connections between devices may malfunction. This can endanger the operator’s safety when the system stops working. A back-up safety system therefore has to be running at all times, and a malfunction of this system results in a protective stop of the robot system.
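One common way to turn a silent software failure into a protective stop is a heartbeat watchdog. The sketch below is an assumption about how such a check could look, not a description of the demonstrator's back-up system: the class name `SafetyWatchdog`, the 0.2 s timeout, and the injectable clock are hypothetical, and a real safety function would rely on safety-rated hardware rather than application code.

```python
import time
from typing import Callable


class SafetyWatchdog:
    """Flags a protective stop when the monitored system stops reporting."""

    def __init__(self, timeout_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s
        self._clock = clock            # injectable so the logic can be tested
        self._last_beat = clock()

    def heartbeat(self) -> None:
        """Called by the vision system each time it processes a frame."""
        self._last_beat = self._clock()

    def stop_required(self) -> bool:
        """True when no heartbeat has arrived within the timeout."""
        return self._clock() - self._last_beat > self.timeout_s


# Usage with a simulated clock (values in seconds).
fake_time = [0.0]
watchdog = SafetyWatchdog(timeout_s=0.2, clock=lambda: fake_time[0])

fake_time[0] = 0.1
watchdog.heartbeat()               # vision system is alive
fake_time[0] = 0.25
print(watchdog.stop_required())    # 0.15 s since the last heartbeat

fake_time[0] = 0.5
print(watchdog.stop_required())    # 0.4 s since the last heartbeat
```

The check fails safe: if the monitored process hangs or its connection drops, the heartbeat simply stops arriving and the stop condition becomes true without any action from the failed component.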

Environmental disturbances: Lighting conditions, dust, and electrical interference can affect the depth sensor data, which can cause errors when estimating changes in the environment. With AR HMDs, the limited field of view should also be taken into account.

Limitations of the depth sensor: When the system is scaled to larger environments with a single depth sensor, the point cloud may not be dense enough for accurate estimation. Using multiple depth sensors requires synchronization between them.
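The density limitation follows from geometry: a fixed number of depth pixels is spread over an area that grows with the square of the distance. The following sketch estimates points per square metre on a flat surface facing the sensor. The default resolution (512 x 424) and field of view (about 70.6 x 60 degrees) are nominal Kinect v2 depth figures used here as assumptions, and the function name is hypothetical.

```python
import math


def points_per_m2(distance_m: float,
                  h_res: int = 512, v_res: int = 424,
                  h_fov_deg: float = 70.6, v_fov_deg: float = 60.0) -> float:
    """Approximate point density on a flat surface perpendicular to the sensor."""
    # Width and height of the area covered by the sensor at this distance.
    width = 2.0 * distance_m * math.tan(math.radians(h_fov_deg) / 2.0)
    height = 2.0 * distance_m * math.tan(math.radians(v_fov_deg) / 2.0)
    return (h_res * v_res) / (width * height)


for d in (1.0, 2.0, 4.0):
    print(f"{d:.0f} m: {points_per_m2(d):,.0f} points per square metre")
```

Doubling the distance divides the density by four, which is why a larger cell typically needs additional sensors rather than a single sensor mounted further away.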

Technology readiness level

6 - Safety-approved sensors and systems are commercially available

Sectors of application

Manufacturing assembly, Quality Assurance of assembled components.

Potential sectors of application

Generation of safety zones in public environments

Hardware / Software

Hardware:

UR5 from the Universal Robots family (tested on CB3 and UR software 3.5.4)

Standard 3LCD projector

Laptop/workstation

Software:

ROS Melodic

OpenCV (2.4.x, using the one from the official Ubuntu repositories is recommended)

PCL (1.8.x, using the one from the official Ubuntu repositories is recommended)

ROS Interface to the Kinect One (Kinect v2)

Photos

Video

Collaborative assembly with vision based safety system, a TRINITY use case

https://www.youtube.com/watch?v=BlnJK7sy_2Y&t=160s

Depth-sensor Safety Model for HRC

Depth-based safety model for human-robot collaboration: Generates three different spatial zones in the shar...


Projection-based Interaction Interface for HRC

Interface Generation: The module projects interface that the user can interact with by placing a ha...


Wearable AR-based Interaction Interface for HRC

Generation of AR-based interface for human-robot collaboration: Increase the human operator awarene...


Trainings

To learn more about the solution, click on the link below to access the training on the Moodle platform

Collaborative assembly with vision-based safety system