Object Classification

Main functionalities

A deep convolutional neural network (CNN) is used to classify and sort objects. The module is a robust and fast implementation of 112×112 image classification for several classes, based on a specially optimized deep neural network architecture. When an industrial robot picks an object, the object is classified by the CNN. To train the classifier to recognize new classes of objects, new training datasets must be provided.
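As an illustration of the fixed 112×112 input size mentioned above, the sketch below center-crops a camera frame to a square and resamples it with nearest-neighbour sampling. How the module actually prepares its input is not described here, so the crop strategy and the function names `center_crop_box` and `resize_nearest` are assumptions made for this example only.

```python
TARGET_SIZE = 112  # classifier input resolution stated above


def center_crop_box(width, height):
    """Return (left, top, side) of the largest centered square in the frame."""
    side = min(width, height)
    return (width - side) // 2, (height - side) // 2, side


def resize_nearest(image, size=TARGET_SIZE):
    """Center-crop a row-major image (list of rows) and resample to size x size.

    Nearest-neighbour sampling is an assumption chosen for brevity; a real
    pipeline would more likely use an OpenCV resize with interpolation.
    """
    height, width = len(image), len(image[0])
    left, top, side = center_crop_box(width, height)
    resized = []
    for y in range(size):
        source_row = image[top + (y * side) // size]
        resized.append(
            [source_row[left + (x * side) // size] for x in range(size)]
        )
    return resized
```

For a 640×480 frame this keeps the central 480×480 region and maps it onto a 112×112 grid.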

Technical specifications

Training can be done on a standard desktop PC. To reach a precision of up to 99%, training the model requires at least 1000 images of the object. The maximum number of object classes is not specified; the system has been tested with 7 classes.
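A quick check of a new training dataset against the 1000-image figure could look like the sketch below. It assumes a hypothetical layout with one sub-directory per object class and common image file extensions; neither the layout nor the helper names come from the module itself.

```python
import os

MIN_IMAGES_PER_CLASS = 1000  # figure quoted above
IMAGE_EXTENSIONS = (".png", ".jpg", ".jpeg")  # assumed file formats


def count_images_per_class(dataset_dir):
    """Count image files in each class sub-directory of dataset_dir."""
    counts = {}
    for name in sorted(os.listdir(dataset_dir)):
        class_dir = os.path.join(dataset_dir, name)
        if not os.path.isdir(class_dir):
            continue  # skip stray files next to the class folders
        counts[name] = sum(
            1 for f in os.listdir(class_dir)
            if f.lower().endswith(IMAGE_EXTENSIONS)
        )
    return counts


def undersized_classes(counts, minimum=MIN_IMAGES_PER_CLASS):
    """Return the class names that have fewer than `minimum` images."""
    return sorted(name for name, n in counts.items() if n < minimum)
```

Calling `undersized_classes(count_images_per_class("dataset"))` on such a directory lists the classes that still need more images before training.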

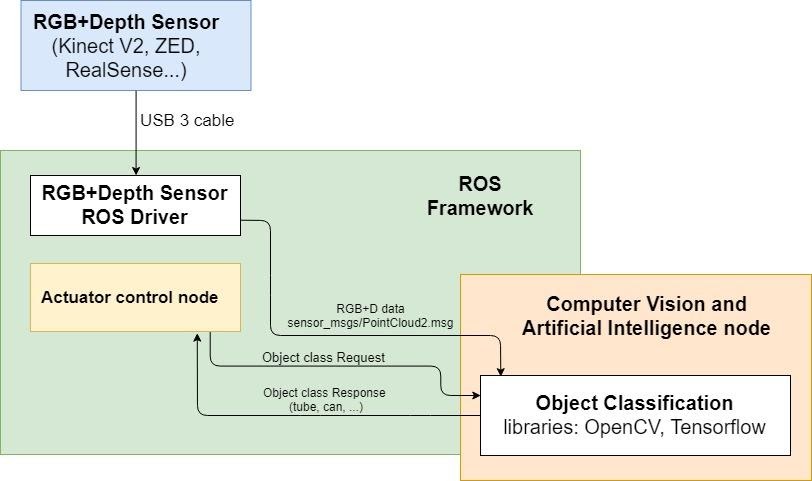

The depth sensor is connected to a PC that runs ROS Melodic on Ubuntu 18.04. Currently, Intel RealSense D415 and D435, Kinect v2, and Zivid depth cameras are supported, but any camera with a ROS driver can be used if its data can be published as PointCloud2. All software for this module is implemented in Python 2.7.

Inputs and outputs

All data is transferred via the standard ROS transport system, with publish/subscribe and request/response semantics. This module subscribes to RGB+Depth sensor data and returns the object class on request.

Formats and standards

ROS service communication is used to request the object class.
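A client-side request might be sketched as follows. The service name `classify_object` and the use of the generic `std_srvs/Trigger` service type are assumptions for illustration; the module's actual service name and type are not given here. The ROS imports are placed inside the function so that the response helper can be used without a ROS installation.

```python
def class_from_response(response):
    """Return the class label carried by a Trigger-style response, or None.

    Assumes the label is in `message` and `success` flags a valid result.
    """
    return response.message if response.success else None


def request_object_class(service_name="classify_object", timeout=5.0):
    """Call the (hypothetical) classification service and return the label."""
    import rospy                      # requires a ROS environment
    from std_srvs.srv import Trigger  # assumed service type

    rospy.wait_for_service(service_name, timeout=timeout)
    classify = rospy.ServiceProxy(service_name, Trigger)
    return class_from_response(classify())
```

A dedicated service definition with a typed class field would be a cleaner choice than `Trigger`; the generic type is used here only to keep the sketch free of custom message packages.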

The sensor data is received from the sensor driver in the sensor_msgs/PointCloud2 message format.
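To show what a PointCloud2 payload contains, the sketch below decodes one point from the raw `data` buffer. It assumes little-endian data, float32 `x`, `y`, `z` fields, and colour packed PCL-style as `0x00RRGGBB` in a 4-byte `rgb` field. The default offsets are placeholders: a real message lists the true offsets in its `fields` array, and in a ROS environment `sensor_msgs.point_cloud2.read_points` handles this decoding.

```python
import struct


def read_xyzrgb(data, point_step, index,
                x_off=0, y_off=4, z_off=8, rgb_off=16):
    """Decode point `index` from a PointCloud2-style byte buffer.

    Offsets are illustrative defaults; take the real ones from msg.fields.
    Returns ((x, y, z), (r, g, b)).
    """
    base = index * point_step
    x, = struct.unpack_from("<f", data, base + x_off)
    y, = struct.unpack_from("<f", data, base + y_off)
    z, = struct.unpack_from("<f", data, base + z_off)
    # Read the colour bytes as an unsigned integer to avoid float round-trips.
    packed, = struct.unpack_from("<I", data, base + rgb_off)
    r = (packed >> 16) & 0xFF
    g = (packed >> 8) & 0xFF
    b = packed & 0xFF
    return (x, y, z), (r, g, b)
```

With a subscribed message `msg`, the call would be `read_xyzrgb(msg.data, msg.point_step, i, ...)` using the offsets reported by the driver.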

ROS, OpenCV, TensorFlow, PCL, Python standard libraries.

Owner (organization)

Institute of Electronics and Computer Science (EDI)

Trainings

Under development