Collaborative (dis)assembly with augmented reality interaction

Name of demonstration

Collaborative (dis)assembly with augmented reality interaction

Main objective

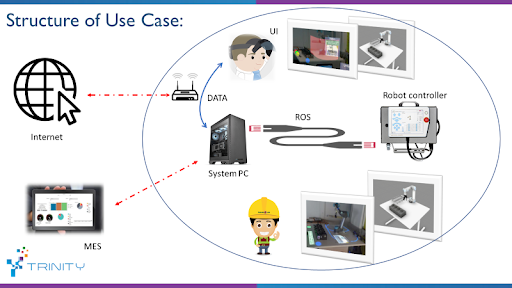

The main goal is to provide a safe and intuitive robotic interface for multi-machine work environments, in which a human worker operates together with traditional industrial robots (payload up to 50 kg) and mobile robots. Safety is ensured by an external vision system and AR-based technology.

Short description

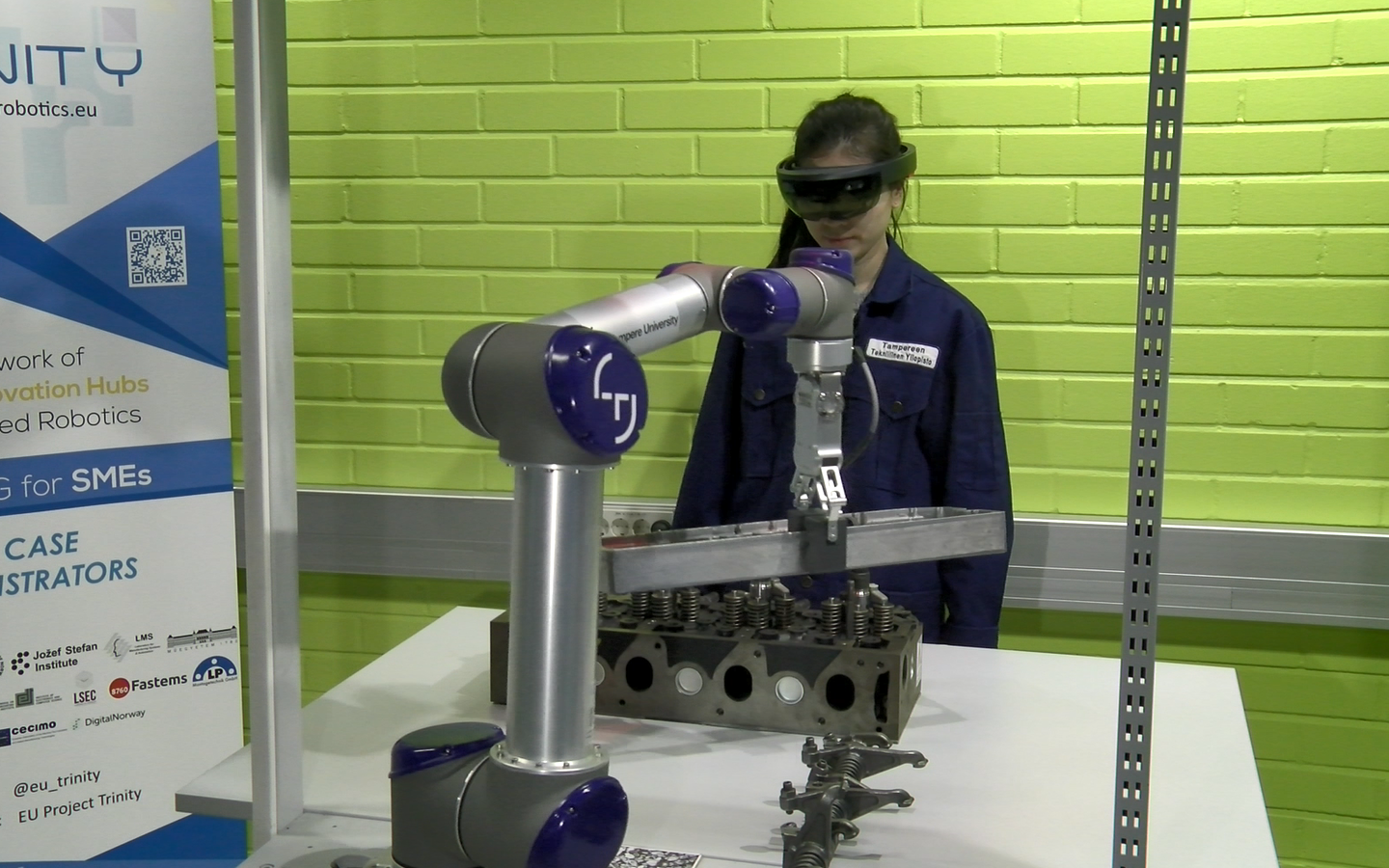

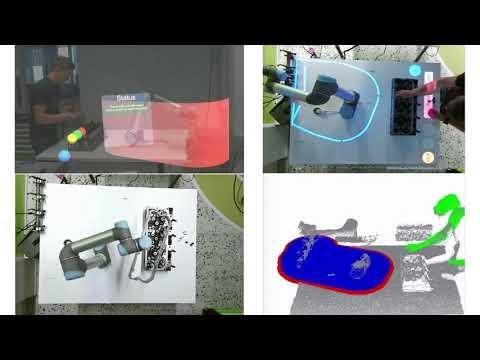

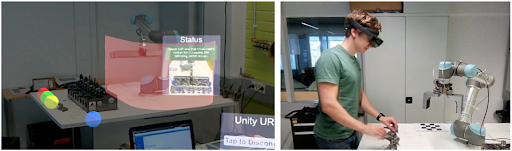

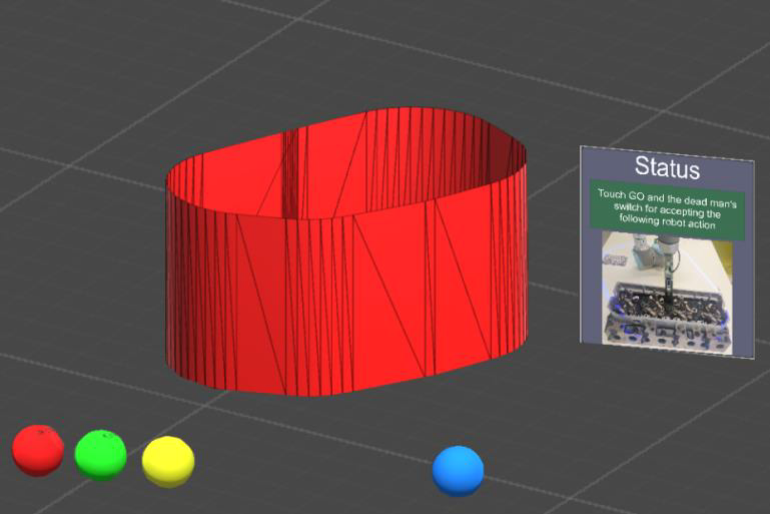

We introduce a robotic application consisting of multiple robot agents that share a common task with a human co-worker. The system includes an external vision system that scans products and recognizes their type and pose (position and orientation) in the shared workspace. The cell control system allocates tasks between the robots and the operator. The operator sees the disassembly instructions and safety-related information through a wearable AR device (Microsoft HoloLens) and communicates with the system using hand gestures and speech. A multi-camera/RGB-D system monitors the workspace for safety violations and either halts the robot or reduces its speed. The system is demonstrated in the disassembly of an industrial product.
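
The halt-or-slow-down behaviour of the workspace monitor can be illustrated with a minimal sketch. It assumes that the vision pipeline already provides the smallest distance between any tracked human point and the robot. The threshold values, the enum and the function names are hypothetical and are not taken from the demonstrator. Real values would come from a risk assessment.

```python
from enum import Enum


class RobotCommand(Enum):
    """Hypothetical commands the monitoring node could send to the robot."""
    FULL_SPEED = "full_speed"
    REDUCED_SPEED = "reduced_speed"
    HALT = "halt"


# Hypothetical distance thresholds in metres (assumption, not project values).
STOP_DISTANCE_M = 0.5
SLOW_DISTANCE_M = 1.5


def select_command(min_human_robot_distance_m: float) -> RobotCommand:
    """Map the smallest measured human-robot distance to a robot command."""
    if min_human_robot_distance_m < 0:
        raise ValueError("distance must be non-negative")
    if min_human_robot_distance_m < STOP_DISTANCE_M:
        return RobotCommand.HALT
    if min_human_robot_distance_m < SLOW_DISTANCE_M:
        return RobotCommand.REDUCED_SPEED
    return RobotCommand.FULL_SPEED


def speed_scaling(command: RobotCommand, reduced_factor: float = 0.3) -> float:
    """Return a speed-override fraction (0.0 to 1.0) for the given command."""
    if command is RobotCommand.HALT:
        return 0.0
    if command is RobotCommand.REDUCED_SPEED:
        return reduced_factor
    return 1.0
```

For example, a measured distance of 1.0 m falls between the two thresholds and yields `REDUCED_SPEED`, which maps to a 30% speed override in this sketch.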

The presented use case has been demonstrated at technology readiness level 6 (TRL 6), and the concept has been proven functional. However, because the technology was built on commercial hardware that is no longer supported, further development of this use case has been suspended. Related technology modules and technical solutions can be found as technical modules in the use case “Collaborative Assembly with Vision-Based Safety System” (https://trinityrobotics.eu/use-cases/collaborative-assembly-with-vision-based-safety-system/).

Owner of the demonstrator

Tampere University

Responsible person

Morteza Dianatfar morteza.dianatfar@tuni.fi

NACE

C26 - Manufacture of computer, electronic and optical products

Keywords

Robotics, Human-Robot Collaboration, Safety, Augmented Reality, Manufacturing.

Potential users

SMEs: The technology can be adopted to improve safety during manufacturing processes and to make safety zones more distinguishable to workers.

Research institutes and academia: The system can be used as a framework for teaching the safety regulations of human-robot collaboration. It can also be used to create interactive practical exercises, using AR to present instructions for each task while keeping safety regulations in check.

Benefits for the users

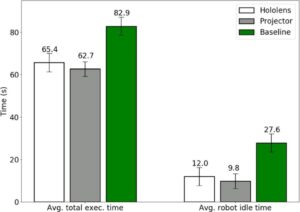

Increasing safety awareness: A vision-based safety system can reduce safety violations caused by human error. As a result, production cycle time can be shortened, because such mistakes no longer stop the production line. A suitable GUI notifies the operator when he/she violates the robot's working zone and helps the operator avoid this error. The UI also displays assembly instructions in front of the operator. This helps the operator focus on the task instead of being distracted by reading a manual, as in the traditional method. This type of interaction helps the operator perceive the task sequence faster and avoid mistakes when assembling components. Based on a user test and survey, the application of this use case was compared to a baseline in which the robot did not move in the same workspace as the operator. The results were evaluated by measuring robot idle time and total execution time.

Figure 1. AR-based interaction for human-robot collaborative manufacturing (Antti Hietanen, Roel Pieters, Minna Lanz, Jyrki Latokartano, Joni-Kristian Kämäräinen)
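
The two evaluation metrics, total execution time and robot idle time, can be derived from timestamped logs. The sketch below assumes that the log has been reduced to a task start and end time plus a list of intervals during which the robot was busy. This log format and the function names are hypothetical and do not describe the study's actual tooling.

```python
from typing import List, Tuple

Interval = Tuple[float, float]  # (start_s, end_s) in seconds


def merge_intervals(intervals: List[Interval]) -> List[Interval]:
    """Merge overlapping or touching intervals so busy time is not counted twice."""
    merged: List[Interval] = []
    for start, end in sorted(intervals):
        if end < start:
            raise ValueError(f"interval ends before it starts: {(start, end)}")
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def execution_and_idle_time(
    task_start_s: float, task_end_s: float, robot_busy: List[Interval]
) -> Tuple[float, float]:
    """Return (total execution time, robot idle time) for one task run."""
    total = task_end_s - task_start_s
    busy = 0.0
    for start, end in merge_intervals(robot_busy):
        # Clip each busy interval to the task window before summing it.
        lo, hi = max(start, task_start_s), min(end, task_end_s)
        if hi > lo:
            busy += hi - lo
    return total, total - busy
```

With a hypothetical 100 s task and busy intervals (0, 30), (20, 50) and (70, 90), the overlapping intervals merge to 70 s of robot activity, leaving 30 s of idle time.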

Improving user experience: The AR interface can be used to provide instructions on how to execute the current task. Research has shown that tasks performed with the help of the safety system can be completed 21-24% faster and that robot idle time is reduced by 57-64%.
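
The percentages above appear to be relative reductions with respect to the baseline. Under that assumption, each one is computed as (baseline − new) / baseline × 100%. The helper below shows the arithmetic. The example inputs are purely hypothetical and are not measured data from the study.

```python
def relative_reduction(baseline: float, improved: float) -> float:
    """Percentage by which `improved` is lower than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline - improved) / baseline


# Hypothetical example values, not results from the study:
# a task taking 100 s in the baseline and 78 s with the system is 22% faster,
# and robot idle time dropping from 50 s to 20 s is a 60% reduction.
print(relative_reduction(100.0, 78.0))
print(relative_reduction(50.0, 20.0))
```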

Innovation

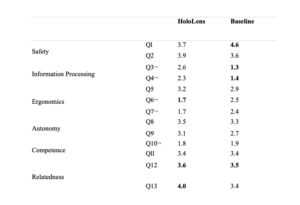

This use case uses augmented reality head-mounted display technology to prevent human errors in the assembly sequence. In addition, depth sensors such as the Kinect track the operator's body parts to detect situations in which a safety violation could occur. This is a new approach for collaborative work cells and provides a vision-based safety concept for the operator. The approach was studied in an academic research laboratory, where 20 participants took part in a survey. The questionnaire covered six categories: Safety, Information Processing, Ergonomics, Autonomy, Competence, and Relatedness.

Table 1. Average scores for the questions (Q1-Q13). Higher is better except for those marked with “¬”. The best results are emphasized (multiple if there is no statistical significance).
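
The body-tracking check performed with the depth sensors can be sketched as counting depth points inside a protected zone. This sketch makes several assumptions. The points are assumed to be already segmented as belonging to the human and transformed into the robot base frame. The zone is modelled as an axis-aligned box. The `SafetyBox` class, `zone_violated` and the `min_points` noise threshold are all hypothetical. The demonstrator's actual zone model may differ.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres, robot base frame


@dataclass(frozen=True)
class SafetyBox:
    """Axis-aligned box around the robot's working zone (hypothetical model)."""
    min_corner: Point
    max_corner: Point

    def contains(self, p: Point) -> bool:
        return all(lo <= c <= hi
                   for c, lo, hi in zip(p, self.min_corner, self.max_corner))


def zone_violated(points: Iterable[Point], zone: SafetyBox,
                  min_points: int = 50) -> bool:
    """Report a violation when enough human points fall inside the zone.

    Requiring `min_points` hits makes the check less sensitive to isolated
    depth-sensor noise; the default value is an arbitrary placeholder.
    """
    hits = 0
    for p in points:
        if zone.contains(p):
            hits += 1
            if hits >= min_points:
                return True
    return False
```

The early return stops scanning the point cloud as soon as a violation is certain, which keeps the per-frame cost low for large clouds.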

Risks and limitations

Battery Shortage: Because the whole application runs on the HMD, the limited battery capacity of this technology must be taken into account. The headset therefore has to be recharged during the working shift, which can increase the idle time of the production line.

Software Malfunction: Because the monitoring system is operated by a computer, the software or the connections between devices may malfunction. This can endanger the operator's safety if the monitoring system stops working. Therefore, a back-up safety system must be running at all times, and a malfunction of this system must result in a protective stop of the robot system.
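
One common way to make a monitoring failure lead to a protective stop is a heartbeat watchdog. This is a generic pattern and not the demonstrator's documented mechanism. The monitoring software reports each completed cycle. If no report arrives within a timeout, the watchdog triggers a stop and latches it. The `HeartbeatWatchdog` class and the `on_protective_stop` callback are hypothetical. In a real cell, this role would belong to a safety-rated controller rather than Python code.

```python
import time
from typing import Callable, Optional


class HeartbeatWatchdog:
    """Trigger a protective stop if the monitoring software stops reporting.

    `on_protective_stop` is a hypothetical callback that would command the
    robot controller to stop; here it is any zero-argument function.
    """

    def __init__(self, timeout_s: float, on_protective_stop: Callable[[], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s
        self.on_protective_stop = on_protective_stop
        self.clock = clock
        self.last_beat: Optional[float] = None
        self.stopped = False

    def beat(self) -> None:
        """Called by the monitoring software each time it completes a cycle."""
        self.last_beat = self.clock()

    def check(self) -> bool:
        """Poll periodically; returns True once a protective stop was issued."""
        if self.stopped:
            return True  # latched: clearing it would be a deliberate reset
        if self.last_beat is None or self.clock() - self.last_beat > self.timeout_s:
            self.stopped = True
            self.on_protective_stop()
        return self.stopped
```

The design is fail-safe: a watchdog that has never received a heartbeat also stops the robot, and the stop is not cleared automatically when heartbeats resume.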

Field of View: The field of view and the monitoring of the working area depend on the specifications of the HMD. These limitations can make it harder for the operator to access the UI in the working space and can cause him/her to lose focus on the task itself. For example, the Microsoft HoloLens v1 has a limited FOV, so the operator needs to turn his/her head to see the GUI.
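
The head-turning problem can be made concrete with a small geometric check that tests whether a UI element lies inside the display's horizontal field of view. The check relies on two assumptions. The 30° horizontal FOV is an approximate value often quoted for the first-generation HoloLens. The coordinate convention is y up and z forward. The function names are hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

# Assumed approximate horizontal FOV of the first-generation HoloLens (degrees).
HORIZONTAL_FOV_DEG = 30.0


def horizontal_angle_deg(head_forward: Vec3, head_to_target: Vec3) -> float:
    """Yaw angle between gaze direction and target, ignoring height (y is up)."""
    fx, _, fz = head_forward
    tx, _, tz = head_to_target
    angle = math.atan2(tx, tz) - math.atan2(fx, fz)
    # Wrap the difference into the range [-180, 180) degrees.
    return (math.degrees(angle) + 180.0) % 360.0 - 180.0


def is_visible(head_forward: Vec3, head_to_target: Vec3,
               fov_deg: float = HORIZONTAL_FOV_DEG) -> bool:
    """True if the target lies within the horizontal FOV of the display."""
    return abs(horizontal_angle_deg(head_forward, head_to_target)) <= fov_deg / 2.0
```

With these assumptions, a UI panel 45° to the side of the gaze direction is outside the 15° half-angle, so the operator would have to turn his/her head to see it.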

Limitations of depth sensor: Scaling the system to larger environments with a single depth sensor can produce a point cloud that is not dense enough for accurate estimation. Using multiple depth sensors, in turn, requires synchronization between them.
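
Part of the synchronization problem is aligning frames from different sensors in time before their point clouds are fused. In a ROS Melodic setup, the `message_filters` package offers `ApproximateTimeSynchronizer` for this purpose. The standalone sketch below only illustrates the idea. It pairs each frame of sensor A with the nearest-in-time frame of sensor B and drops pairs that differ by more than a tolerance. The function names and the 20 ms tolerance are hypothetical. Timestamp matching alone does not address hardware-level issues between sensors.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence, Tuple


def nearest_index(sorted_stamps: Sequence[float], t: float) -> Optional[int]:
    """Index of the timestamp closest to t in a sorted sequence."""
    if not sorted_stamps:
        return None
    i = bisect_left(sorted_stamps, t)
    # The closest stamp is either just before or at the insertion point.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(sorted_stamps)]
    return min(candidates, key=lambda j: abs(sorted_stamps[j] - t))


def pair_frames(stamps_a: Sequence[float], stamps_b: Sequence[float],
                tolerance_s: float = 0.02) -> List[Tuple[int, int]]:
    """Pair each frame of sensor A with the closest frame of sensor B.

    Pairs whose timestamps differ by more than `tolerance_s` are dropped so
    that point clouds captured at different moments are not fused.
    """
    pairs = []
    for ia, t in enumerate(stamps_a):
        ib = nearest_index(stamps_b, t)
        if ib is not None and abs(stamps_b[ib] - t) <= tolerance_s:
            pairs.append((ia, ib))
    return pairs
```

For example, with sensor A stamps [0.0, 0.033, 0.066, 0.1] and sensor B stamps [0.005, 0.040, 0.150], only the first two A frames have a B frame within 20 ms.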

Technology readiness level

6 - Safety approved sensors and systems are commercially available

Sectors of application

Collaborative manufacturing: This technology improves the safety of the robot cell environment and provides tools for setting up the UI and instructions for the manufacturing task.

Manufacturing assembly of mid-heavy components: Users can benefit from collaborating with a robot that picks the heavier components, while human intelligence is used for the complex parts. The system is safe when the robot has an inherently safe design and the vision-based safety system helps the human avoid errors and possible injuries.

Quality assurance of assembled components: The robot system can detect and sort obvious, easier defects, while the human inspects less certain cases. The vision-based safety system assists the human in their task and allows smooth cooperation between human and robot.

Patents / Licenses / Copyrights

The module will be licensed under the BSD-3-Clause licence.

Hardware / Software

Hardware:

UR5 from the Universal Robots family (tested on CB3 with UR software 3.5.4)

Microsoft HoloLens v1

Laptop/workstation

Software:

ROS Melodic

OpenCV (2.4.x, using the one from the official Ubuntu repositories is recommended)

PCL (1.8.x, using the one from the official Ubuntu repositories is recommended)

ROS Interface to the Kinect One (Kinect v2)

Trainings

To learn more about the solution, click on the link below to access the training on the Moodle platform:

Collaborative disassembly with augmented reality interaction